Is this better colored in?

“Computers will understand sarcasm before Americans do.”

The quote above is from "The Great A.I. Awakening," a New York Times history of how Google used a machine-learning approach to significantly improve its translation service. Your phone is now very close to being a Babel fish: a real-time translator. Will Americans soon be reading Japanese poetry, listening to Senegalese music, watching Swedish TV and understanding news from the BBC? Babel-fish-like earpieces already exist, and one can imagine soon-to-be-ubiquitous devices like Apple's AirPods connecting audio, microphones and computation directly to your ear for everyday use. How soon can we use this translation service to break down the age-old language barrier?

Challenges for the future:

- Read a website/book in Japanese?

- Listen to French radio translated in real time?

- Watch Swedish TV translated in real time?

- Actually talk to someone who speaks a different language in real time?

We do of course need to make this an everyday part of our lives, but we should also remember Douglas Adams's tongue-in-cheek warning when describing the Babel fish in The Hitchhiker's Guide to the Galaxy: 'Meanwhile the poor Babel fish, by effectively removing all barriers to communication between different cultures and races, has caused more and bloodier wars than anything else in the history of creation.'

...

To guard against link rot, I have copied a few choice paragraphs below, but the original is much longer and worth a read:

.....The second half of Rekimoto’s post examined the service in the other direction, from Japanese to English. He dashed off his own Japanese interpretation of the opening to Hemingway’s “The Snows of Kilimanjaro,” then ran that passage back through Google into English. He published this version alongside Hemingway’s original, and proceeded to invite his readers to guess which was the work of a machine.

NO. 1:

Kilimanjaro is a snow-covered mountain 19,710 feet high, and is said to be the highest mountain in Africa. Its western summit is called the Masai “Ngaje Ngai,” the House of God. Close to the western summit there is the dried and frozen carcass of a leopard. No one has explained what the leopard was seeking at that altitude.

NO. 2:

Kilimanjaro is a mountain of 19,710 feet covered with snow and is said to be the highest mountain in Africa. The summit of the west is called “Ngaje Ngai” in Masai, the house of God. Near the top of the west there is a dry and frozen dead body of leopard. No one has ever explained what leopard wanted at that altitude.

Even to a native English speaker, the missing article on the leopard is the only real giveaway that No. 2 was the output of an automaton. Their closeness was a source of wonder to Rekimoto, who was well acquainted with the capabilities of the previous service. Only 24 hours earlier, Google would have translated the same Japanese passage as follows:

Kilimanjaro is 19,710 feet of the mountain covered with snow, and it is said that the highest mountain in Africa. Top of the west, “Ngaje Ngai” in the Maasai language, has been referred to as the house of God. The top close to the west, there is a dry, frozen carcass of a leopard. Whether the leopard had what the demand at that altitude, there is no that nobody explained.

Rekimoto promoted his discovery to his hundred thousand or so followers on Twitter, and over the next few hours thousands of people broadcast their own experiments with the machine-translation service. Some were successful, others meant mostly for comic effect. As dawn broke over Tokyo, Google Translate was the No. 1 trend on Japanese Twitter, just above some cult anime series and the long-awaited new single from a girl-idol supergroup. Everybody wondered: How had Google Translate become so uncannily artful?

....

Pichai was in London in part to inaugurate Google’s new building there, the cornerstone of a new “knowledge quarter” under construction at King’s Cross, and in part to unveil the completion of the initial phase of a company transformation he announced last year. The Google of the future, Pichai had said on several occasions, was going to be “A.I. first.” What that meant in theory was complicated and had welcomed much speculation. What it meant in practice, with any luck, was that soon the company’s products would no longer represent the fruits of traditional computer programming, exactly, but “machine learning.”

A rarefied department within the company, Google Brain, was founded five years ago on this very principle: that artificial “neural networks” that acquaint themselves with the world via trial and error, as toddlers do, might in turn develop something like human flexibility.

....

As of the previous weekend, Translate had been converted to an A.I.-based system for much of its traffic, not just in the United States but in Europe and Asia as well: The rollout included translations between English and Spanish, French, Portuguese, German, Chinese, Japanese, Korean and Turkish. The rest of Translate’s hundred-odd languages were to come, with the aim of eight per month, by the end of next year. The new incarnation, to the pleasant surprise of Google’s own engineers, had been completed in only nine months. The A.I. system had demonstrated overnight improvements roughly equal to the total gains the old one had accrued over its entire lifetime.

Pichai has an affection for the obscure literary reference; he told me a month earlier, in his office in Mountain View, Calif., that Translate in part exists because not everyone can be like the physicist Robert Oppenheimer, who learned Sanskrit to read the Bhagavad Gita in the original. In London, the slide on the monitors behind him flicked to a Borges quote: “Uno no es lo que es por lo que escribe, sino por lo que ha leído.”

Grinning, Pichai read aloud an awkward English version of the sentence that had been rendered by the old Translate system: “One is not what is for what he writes, but for what he has read.”

To the right of that was a new A.I.-rendered version: “You are not what you write, but what you have read.”

.....

The theoretical work to get them to this point had already been painstaking and drawn-out, but the attempt to turn it into a viable product — the part that academic scientists might dismiss as “mere” engineering — was no less difficult. For one thing, they needed to make sure that they were training on good data. Google’s billions of words of training “reading” were mostly made up of complete sentences of moderate complexity, like the sort of thing you might find in Hemingway. Some of this is in the public domain: The original Rosetta Stone of statistical machine translation was millions of pages of the complete bilingual records of the Canadian Parliament. Much of it, however, was culled from 10 years of collected data, including human translations that were crowdsourced from enthusiastic respondents. The team had in their storehouse about 97 million unique English “words.” But once they removed the emoticons, and the misspellings, and the redundancies, they had a working vocabulary of only around 160,000.

Then you had to refocus on what users actually wanted to translate, which frequently had very little to do with reasonable language as it is employed. Many people, Google had found, don’t look to the service to translate full, complex sentences; they translate weird little shards of language. If you wanted the network to be able to handle the stream of user queries, you had to be sure to orient it in that direction. The network was very sensitive to the data it was trained on. As Hughes put it to me at one point: “The neural-translation system is learning everything it can. It’s like a toddler. ‘Oh, Daddy says that word when he’s mad!’ ” He laughed. “You have to be careful.”

More than anything, though, they needed to make sure that the whole thing was fast and reliable enough that their users wouldn’t notice. In February, the translation of a 10-word sentence took 10 seconds. They could never introduce anything that slow. The Translate team began to conduct latency experiments on a small percentage of users, in the form of faked delays, to identify tolerance. They found that a translation that took twice as long, or even five times as long, wouldn’t be registered. An eightfold slowdown would. They didn’t need to make sure this was true across all languages. In the case of a high-traffic language, like French or Chinese, they could countenance virtually no slowdown. For something more obscure, they knew that users wouldn’t be so scared off by a slight delay if they were getting better quality. They just wanted to prevent people from giving up and switching over to some competitor’s service.

.....

By late spring, the various pieces were coming together. The team introduced something called a “word-piece model,” a “coverage penalty,” “length normalization.” Each part improved the results, Schuster says, by maybe a few percentage points, but in aggregate they had significant effects. Once the model was standardized, it would be only a single multilingual model that would improve over time, rather than the 150 different models that Translate currently used. Still, the paradox — that a tool built to further generalize, via learning machines, the process of automation required such an extraordinary amount of concerted human ingenuity and effort — was not lost on them. So much of what they did was just gut. How many neurons per layer did you use? 1,024 or 512? How many layers? How many sentences did you run through at a time? How long did you train for?

“We did hundreds of experiments,” Schuster told me, “until we knew that we could stop the training after one week. You’re always saying: When do we stop? How do I know I’m done? You never know you’re done. The machine-learning mechanism is never perfect. You need to train, and at some point you have to stop. That’s the very painful nature of this whole system. It’s hard for some people. It’s a little bit an art — where you put your brush to make it nice. It comes from just doing it. Some people are better, some worse.”

By May, the Brain team understood that the only way they were ever going to make the system fast enough to implement as a product was if they could run it on T.P.U.s, the special-purpose chips that Dean had called for. As Chen put it: “We did not even know if the code would work. But we did know that without T.P.U.s, it definitely wasn’t going to work.” He remembers going to Dean one on one to plead, “Please reserve something for us.” Dean had reserved them. The T.P.U.s, however, didn’t work right out of the box. Wu spent two months sitting next to someone from the hardware team in an attempt to figure out why. They weren’t just debugging the model; they were debugging the chip. The neural-translation project would be proof of concept for the whole infrastructural investment.

...

What they had shown, Dean said, was that they could do two major things at once: “Do the research and get it in front of, I dunno, half a billion people.”

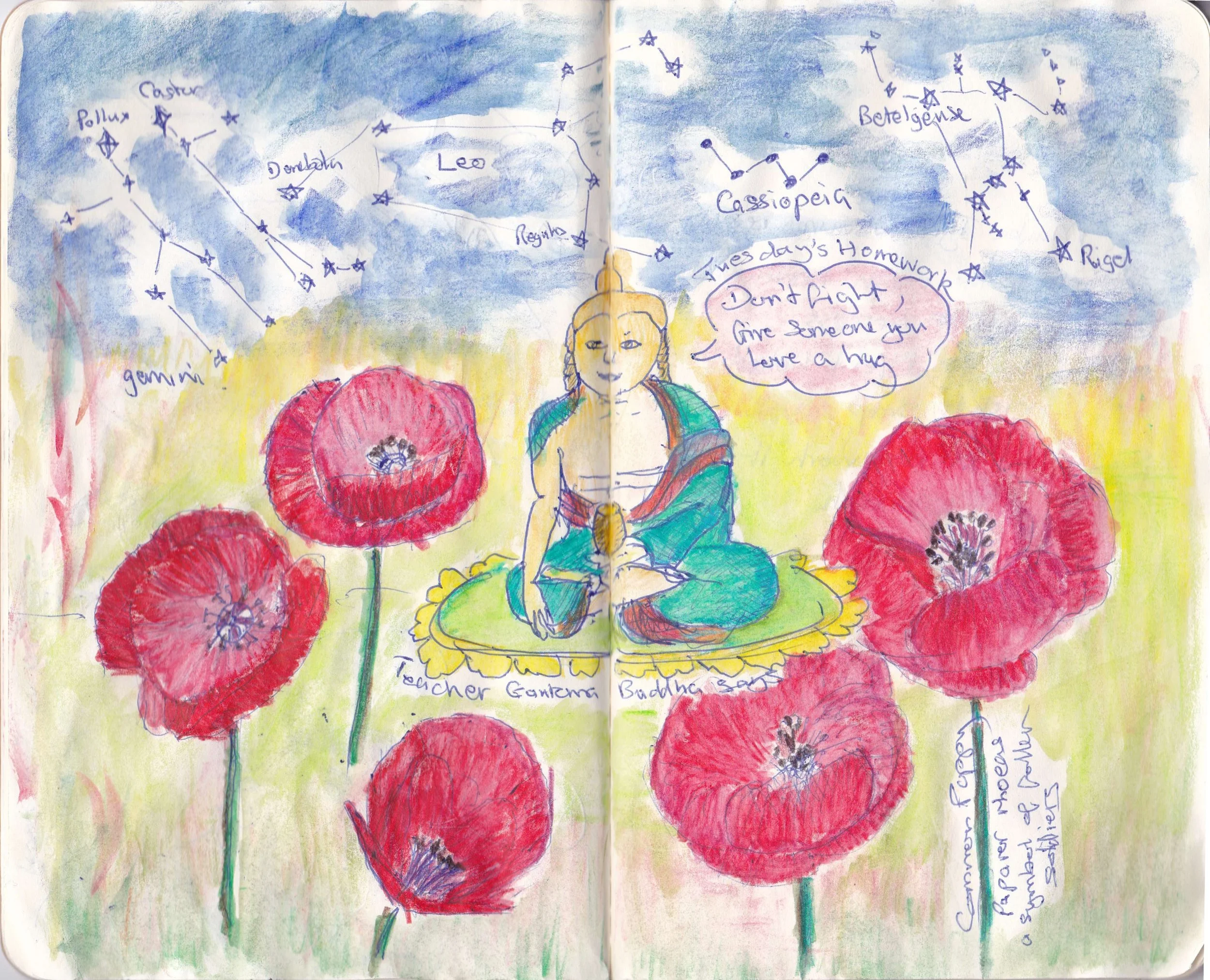

Julian vs. the Homework - Roughest Draft now out!

The very first draft of ideas for Julian vs. the Homework (JvH) is now here - read the book before it is even really started! The Fighting Homework link will stay at the top so you can see it at any time, and I will add updates here as changes to various pages are made. ALL COMMENTS AND SUGGESTIONS ARE WELCOME - I LOVE RED INK!

Learning Stats 1: Republicans lie way more than Democrats (p-value 0.0007)

In this new internet world we can look at people's statements and use that knowledge to see who is trying to lie to us, con us, hurt us or take our money. If we find such people or organizations, it is not always clear what to do, but we can vote for a different party or buy from a different company. In the internet world the data is pretty easy to get, as it floats around freely.

Here is a dataset from the New York Times, culled from PolitiFact (which I personally think obsesses a little over provable details at the expense of big, important lies). Let's look at the chart:

Obviously, some of the names at the bottom with the fewest lies are Democrats, and the names at the top with the most lies are Republicans. But the NYT often succumbs to "views of the shape of the earth differ" reporting, so it didn't label the politicians' parties and titled the article "All Politicians Lie. Some Lie More Than Others." So let's add the party labels* (csv file here) and load the data into RStudio to quantify this. (Thanks to the internet, which is now your external brain, and to Mike Marin posting new memories for you on YouTube, here is a nice video that shows you how.)

```r
# Welch two-sample t-test of % statements rated "Mostly False and Worse", by party
t.test(Which_party_lies$Mostly.False.and.Worse ~ Which_party_lies$Party,
       mu = 0, alternative = "two.sided", conf.level = 0.95, var.equal = FALSE)
```

```
        Welch Two Sample t-test

data:  Which_party_lies$Mostly.False.and.Worse by Which_party_lies$Party
t = -4.5566, df = 11.802, p-value = 0.0006868
alternative hypothesis: true difference in means is not equal to 0
95 percent confidence interval:
 -36.48342 -12.84991
sample estimates:
mean in group D mean in group R
       26.33333        51.00000
```

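That is, the null hypothesis (that party identification has no impact on lying, i.e. that Democrats and Republicans have the same mean percentage of statements rated Mostly False or worse) is rejected with a p-value of 0.0007. Party identification has an impact on how often politicians lie.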
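For anyone wondering where the t, df and p-value in that output come from, here is a minimal sketch of Welch's test done by hand in R. The function name `welch_by_hand` and the two score vectors are hypothetical toy data, not the PolitiFact numbers from the CSV file; the only point is that the hand calculation agrees with `t.test()`.

```r
# Welch's two-sample t-test worked by hand, to show where the t, df and
# p-value printed by t.test() come from. The two vectors below are
# hypothetical toy numbers, NOT the PolitiFact data from the CSV file.
welch_by_hand <- function(x, y) {
  se2_x <- var(x) / length(x)  # squared standard error of mean(x)
  se2_y <- var(y) / length(y)  # squared standard error of mean(y)
  t_stat <- (mean(x) - mean(y)) / sqrt(se2_x + se2_y)
  # Welch-Satterthwaite approximation to the degrees of freedom
  dof <- (se2_x + se2_y)^2 /
    (se2_x^2 / (length(x) - 1) + se2_y^2 / (length(y) - 1))
  p_value <- 2 * pt(-abs(t_stat), dof)  # two-sided p-value
  c(t = t_stat, df = dof, p.value = p_value)
}

d_pct <- c(10, 20, 30, 25, 15)      # hypothetical % mostly false, group D
r_pct <- c(40, 55, 60, 45, 50, 65)  # hypothetical % mostly false, group R

by_hand  <- welch_by_hand(d_pct, r_pct)
built_in <- t.test(d_pct, r_pct, var.equal = FALSE)
print(by_hand)
print(c(built_in$statistic, built_in$parameter, p.value = built_in$p.value))

# The reported t and df alone reproduce the reported p-value (about 0.00069):
2 * pt(-4.5566, df = 11.802)
```

Because the two groups are not assumed to have equal variances, the degrees of freedom come out as a non-integer (11.802 in the real output), which is the tell-tale sign of Welch's version of the test.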

Which party lies more, and how much more? If the output above didn't convince you, a quick plot makes it obvious:

```r
# Box plot of % "Mostly False and Worse" statements, one box per party
boxplot(Which_party_lies$Mostly.False.and.Worse ~ Which_party_lies$Party,
        main = "% Mostly False and Worse Statements by US Political Party",
        ylab = "% Statements Mostly False and Worse",
        xlab = "Party Affiliation", col = c("blue", "red"))
```

Sometimes a few colors make it a bit more obvious... On average, the Republicans looked at here lie more than half of the time (a mean of 51%), the Democrats only about a quarter of the time (26%), with a correspondingly greater commitment to truth.

One last thing: how much does our new president lie? Donald Trump had 69% of 342 statements labelled Mostly False or worse. With this we can now help the NYT rewrite its headline: "All politicians lie, but Republicans lie most (p-value 0.0007), lying more than half the time, with President-elect Trump lying 69% of the time, so don't trust Mr Trump or other Republicans when they say anything."

Notes:

- When typing in the data I almost made a mistake on Ben Carson: he has 0 true statements, which meant that the first time I shifted all his data into the wrong columns.

- Martin O'Malley is interesting in that he (although solidly in the Democrats-don't-lie-much camp) is the only person here with no True statements and no Pants on Fire, so I presume he is being too political to state a fact straight? Come on, Martin, tell us how it is!

- Here is a link to the CSV file.

A New Mathematics for the Million?

So I have to admit that I didn't take "A-level" Maths (plural in the UK, but over time I have come to better appreciate it being singular in the US, like "sheep"), and so I never really had a great preparation in mathematics. Over the years I tried various books to learn calculus and failed numerous times, but eventually succeeded at Khan Academy, finding it wonderful and basically fun! I am now forcing my way through linear algebra with less success, but I think that might be as much because the subject matter is just a little harder to apply. I also found a book hanging around the house by Lancelot Hogben, Mathematics for the Million, which is quite good, but I am not sure that I recommend it. It is easier to learn calculus from YouTube videos than from this book, but in general it has an infectiousness that is pleasing.

This has had me thinking for a long time about what mathematics the everyman should want to know. Practical things, useful things, a better understanding of finance, how machines are now making decisions for us. I think this list of things that everyone needs to know (although perhaps not how to do) has changed dramatically in the last two decades, and is rapidly changing now, to the point where someone who doesn't want to be a mathematician perhaps doesn't need to care about the proofs. It is also best taught via applied mathematics, i.e. the course should be a set of fascinating problems, so you never hear the question "Why is this interesting again?"

So what is on the list?

- Python, NumPy, pandas, etc.: this is the new place to do math

- Calculus - just the basic stuff to realize that it is fun.

- The fun parts of differential equations - making model equations and differentiating to get insight

- Bayesian stats - which I think are now best learned as part of machine learning

- Linear algebra as a part of machine learning

- Modelling - how everything is being made aerodynamic now

- Finite element analysis?

What do you think should be on the list?

I want to gradually improve this post by adding links to where to learn about these things.

Our newfangled Library of Alexandria: Mr Money Mustache

If only there was some way to access the world's knowledge in a handy portable device....

So one thing I want to do here is collect other people's wisdom in one place, so we don't all have to go google it ourselves every time. Self-help books often give lots of good advice, mixed in with even more advice that kind of dilutes it all, or that perhaps just sounded sexy enough that someone might buy the book.

When I was a child, people would say things like "If you're so smart, why aren't you rich?" It's a good question, and now we all have a connection to the world's knowledge in our pockets. So why aren't we all rich? Well, historically speaking we all are, if you compare us to cavemen, but I think a better answer is that we haven't read enough Mr Money Mustache (meet him here). There is a lot there, but how about starting with three pages. First, an illuminating graph of how the percentage of your take-home pay that you save and invest determines how long it is before you don't need to work anymore (although you might want to, if it is fun). Note the difference in time to "do what you want" between saving 15% (43 years), 30% (28 years) and 45% (19 years). Second, his promotion of the magic of bicycles, which are just amazing. Finally, you should see the talk "How to be happy, rich and save the world" that MMM gave, to get fully committed to the idea that money is far more powerful, and can be used far more efficiently, than we realize in everyday life.
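The numbers in that graph come from simple compound-interest arithmetic. Below is a minimal sketch in R of one way to reproduce them. The function `years_to_fi` is hypothetical, and its assumptions (a 5% return after inflation, a 4% withdrawal rate so that you need 25 times your annual spending, and starting from zero savings) are my own choices rather than something taken from the MMM page, though with them the sketch gives the same 43, 28 and 19 years quoted above.

```r
# Years of saving before investments can cover your spending, under a simple
# model (assumptions, not necessarily MMM's exact spreadsheet):
#   - take-home pay is normalised to 1 per year
#   - you save `savings_rate` of it each year and spend the rest
#   - savings earn `real_return` per year after inflation, starting from zero
#   - you are done when the pot reaches spending / withdrawal_rate
years_to_fi <- function(savings_rate, real_return = 0.05, withdrawal_rate = 0.04) {
  spending <- 1 - savings_rate
  target <- spending / withdrawal_rate  # 25x annual spending at a 4% rate
  # Future value of end-of-year deposits:
  #   savings_rate * ((1 + r)^n - 1) / r = target
  # solved for n:
  log(1 + target * real_return / savings_rate) / log(1 + real_return)
}

rates <- c(0.15, 0.30, 0.45)
round(years_to_fi(rates))
# [1] 43 28 19
```

The savings rate appears twice in the model: it is both the money going into the pot and the money you have learned to live without, which is why raising it shortens the time so dramatically.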

Asking questions...

Hmmmmmm Turkey pot pie.

Image test !!!

Because cream tea is very important

I made a blog!

My son says "Don't tell everyone my real name, you fool, and call your blog something a bit more clever."

My aim here is to try and understand what it means to be smart, in a world where you can look up all of the answers on the google, and machine learning is doing all the thinking faster and better every day.

My son says "Did you seriously just call it 'the google?' "

More later....